Statistical Analysis and Reporting System Highlights

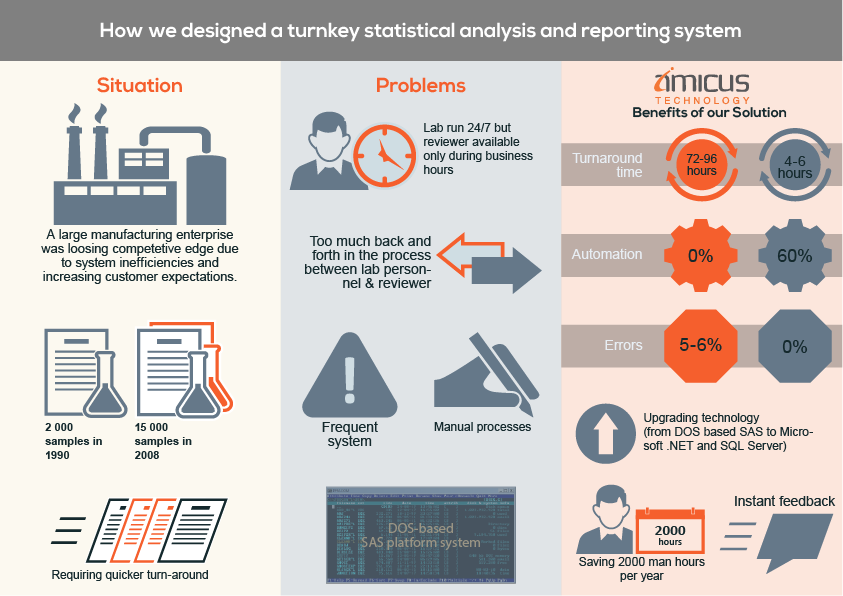

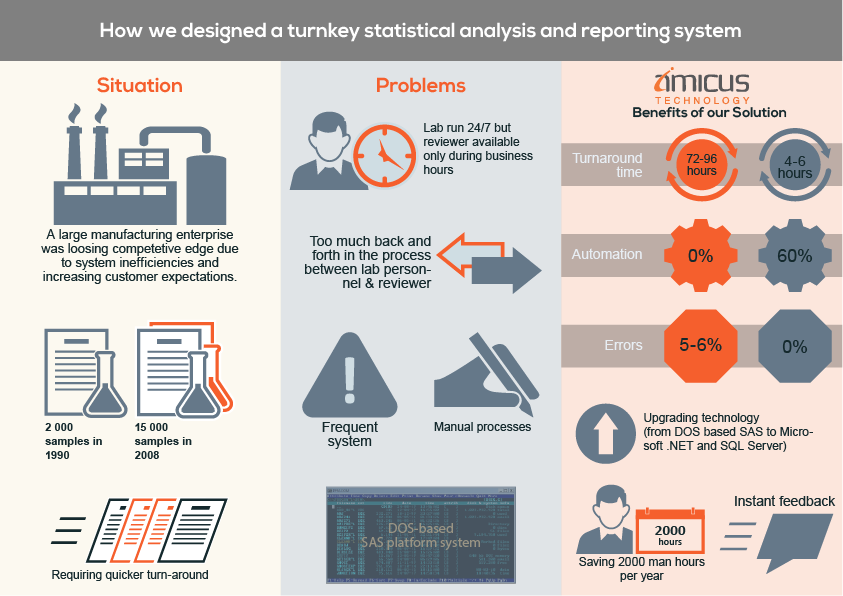

A large manufacturer of chemical catalysts ran a sales-incentive program that offered specialized sample analysis to oil refineries. The company promised the refineries a 48-hour turnaround, but inefficient data interfaces and manual analysis processes caused delays that stretched the turnaround to 96 hours. Customer service levels dropped and sales suffered. Amicus provided the company with a complete turnkey, automated statistical analysis and reporting solution that cut the turnaround time to just 6 hours, exceeding customer expectations.

Business Situation

For years, the incentive program had been hugely successful in attracting new refineries to the client. Over time, however, the volume of sample requests grew (from 2,000 samples in 1990), and the existing systems and processes could no longer manage the workflow. In 2008 alone, the lab analyzed 15,000 samples, producing over 1 million data points that were reported to about 2,000 contacts at 400 refineries across the globe. The result was delayed reports and inconsistent results. The delays began to undermine the sales team’s efforts, and the inaccuracies were a serious concern because this was critical refinery data. Errors of this kind could do serious damage to the company’s image. The Sales Director approached Amicus to solve the problem.

Technical and Business Challenges

Refineries sent samples to the client’s lab through courier services such as FedEx and UPS. Once the samples arrived, the lab logged them into its laboratory management software and, after testing and analysis, entered the test results (picking the best result from multiple outputs). The lab used a hand-transcribed “card” system to evaluate results. Two technical service coordinators reviewed the final results before they were sent back to the refineries and referred any inconsistent results back to the lab for correction and clarification.

Our Solution

In 2008, the lab generated more than 1,000,000 results. Because the process relied on manual reviews, the risk of human error was very high. The lab had 12 lab analysts and chemists, 2 lab supervisors and 2 technical service coordinators to support this process. The existing system was a decade-old, DOS-based SAS platform, and very few operators were trained well enough to manage it. Given the lab’s 24/7 operations and the volume of manual reviews, the whole process was at considerable risk whenever an operator became unavailable (for example, due to illness). In fact, one of the operators told Amicus that she had not taken a vacation in more than 3 years because of the critical nature of her job and the lack of an alternative. We decided to design that alternative.

- We analyzed the data-review logic and built most of the rules into the software, while giving administrators the ability to configure them easily.

- We re-engineered the review process, moving it from the end of the lab cycle to the beginning (i.e., the first stage of sample testing), where automated reviews provide instant feedback and alerts.

- The new web-based application leveraged the existing IT infrastructure to conduct automated, real-time, statistically informed reviews directly at the point of data entry. When lab analysts entered data into the system, they received instant feedback based on a statistical analysis of the 50 most relevant data points from the same client or a similar sample.

- Based on sophisticated business rules, the system automatically categorized each sample for hold or repeat, or reported it directly to the customer without any manual intervention.
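The review logic above is described only at a high level, and the source does not say which statistical test the system applied. As a minimal sketch, assuming a simple z-score comparison against the 50 most relevant prior results, the Python below shows how a newly entered value could be routed to report, repeat or hold using thresholds an administrator can adjust. The names `ReviewRules`, `review_result` and `Decision`, and every threshold value, are hypothetical illustrations rather than the delivered system.

```python
from dataclasses import dataclass
from enum import Enum
from statistics import mean, stdev
from typing import Sequence


class Decision(Enum):
    """Possible outcomes of the automated review (hypothetical labels)."""
    REPORT = "report"  # release the result to the customer automatically
    REPEAT = "repeat"  # ask the analyst to re-run the test
    HOLD = "hold"      # park the sample for human review


@dataclass
class ReviewRules:
    """Administrator-configurable thresholds (assumed values, not from the source)."""
    window: int = 50        # number of most relevant historical points to compare against
    min_history: int = 10   # with fewer points, a statistical check is not meaningful
    repeat_z: float = 2.0   # |z| above this triggers a repeat
    hold_z: float = 3.0     # |z| above this puts the sample on hold


def review_result(value: float, history: Sequence[float], rules: ReviewRules) -> Decision:
    """Classify a newly entered result against comparable historical results.

    Assumes `history` is ordered oldest to newest and is already filtered to
    the same client or a similar sample type.
    """
    recent = list(history)[-rules.window:]  # keep only the most recent `window` points
    if len(recent) < rules.min_history:
        # Too little comparable data for a statistical check: route to a person.
        return Decision.HOLD
    mu = mean(recent)
    sigma = stdev(recent)  # sample standard deviation
    if sigma == 0:
        # All historical values identical: any deviation is treated as suspicious.
        return Decision.REPORT if value == mu else Decision.REPEAT
    z = abs(value - mu) / sigma
    if z > rules.hold_z:
        return Decision.HOLD
    if z > rules.repeat_z:
        return Decision.REPEAT
    return Decision.REPORT


if __name__ == "__main__":
    # Synthetic history: 60 readings cycling through 10.0 to 10.4.
    history = [10.0 + 0.1 * (i % 5) for i in range(60)]
    rules = ReviewRules()
    for new_value in (10.25, 10.55, 11.0):
        print(new_value, review_result(new_value, history, rules).value)
```

With the synthetic history, 10.25 is reported, 10.55 is sent for a repeat and 11.0 is put on hold. A z-score is used here only because it is easy to follow; a real rule set might instead use control-chart limits or per-test tolerances, and keeping the thresholds in a separate configuration object is what lets administrators tune them without code changes.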

Solution Benefits

Besides executing the project flawlessly, on time and within budget, we made several improvements to the overall application infrastructure. Data archiving and purging reduced the database to 40% of its original size. Through consolidation, we reduced the number of database jobs from 322 to 104, mitigated the risk of overlapping jobs, and improved application performance by up to 40%. Some servers that had been running at 100% CPU during peak hours now ran consistently well below capacity. This overload had been one of the prime causes of frequent server failures, and resolving it removed any immediate need for more expensive hardware. Average run durations improved by 70-80% on almost all data transfer jobs.

One of the major accomplishments was reducing the application portfolio by archiving or cleaning up 29 applications and 30 databases and retiring one application server. Job failures dropped significantly, which meant less IT maintenance work and fewer business disruptions. Existing servers running a mix of Windows 2000/2003 and SQL Server 2000/2005 were migrated to Windows 2008 and SQL Server 2005, modernizing and standardizing the technology stack. Source code for the programs running on the servers was documented, along with usernames and passwords, complete architecture diagrams of all servers and data flows, and procedures for rebuilding the complete application environment from scratch in the event of a server failure or disaster.